
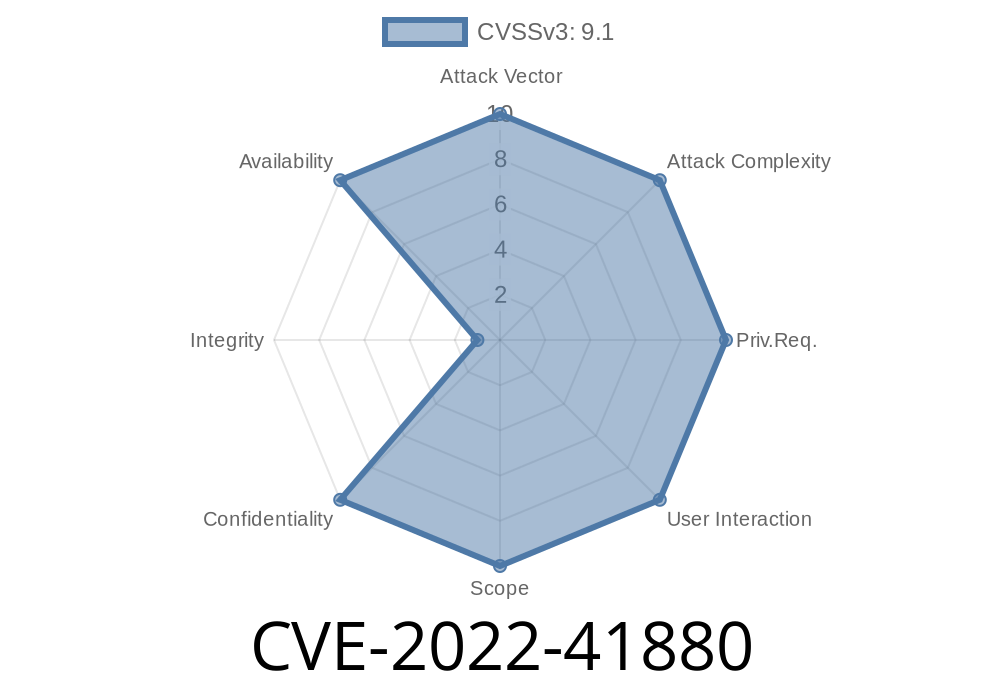

CVE-2022-41880: heap OOB read in TensorFlow (affects TF 2.10.0, TF 2.9.0 to 2.9.2, and versions before TF 2.8.4)



CVE-2022-41880 is a heap out-of-bounds (OOB) read in TensorFlow's `BaseCandidateSamplerOp` kernel, implemented in `tensorflow/core/kernels/candidate_sampler_ops.cc`. When the op receives a value in its `true_classes` input that is larger than `range_max`, it reads past the end of a heap buffer. This can crash the process running the model.
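Until an upgrade is possible, an application can reject out-of-range class IDs before they reach a candidate sampler, such as `tf.random.uniform_candidate_sampler(true_classes, num_true, num_sampled, unique, range_max)`. The sketch below shows one way to do this. The helper `validate_true_classes` is hypothetical and is not part of TensorFlow or its patch. It also conservatively assumes that the only valid class IDs are those in `[0, range_max)`, so it rejects negative IDs and any ID equal to or above `range_max`.

```python
from typing import Iterable, List


def validate_true_classes(
    true_classes: Iterable[Iterable[int]], range_max: int
) -> List[List[int]]:
    """Hypothetical guard: reject class IDs outside [0, range_max).

    A sampler that keeps a per-class table of size range_max must never be
    indexed with an ID outside that range; this check enforces that in
    application code before the IDs are handed to TensorFlow.
    """
    if range_max <= 0:
        raise ValueError("range_max must be positive")
    rows = [list(row) for row in true_classes]
    for r, row in enumerate(rows):
        for c, class_id in enumerate(row):
            if not 0 <= class_id < range_max:
                raise ValueError(
                    f"true_classes[{r}][{c}] = {class_id} is outside [0, {range_max})"
                )
    return rows
```

This check is a defense-in-depth measure for untrusted inputs. Upgrading to a patched release remains the actual fix.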

We have patched this issue in GitHub commit b389f5c944cadfdfe599b3f1e4026e036f30d2d4. The fix will be included in TensorFlow 2.11. We will also cherry-pick this commit onto TensorFlow 2.10.1, 2.9.3, and 2.8.4, because these release lines are also affected and still in the supported range. If you are on an affected version, upgrade as soon as possible.
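To check whether an installed version already contains the fix, the patched releases named above (2.8.4, 2.9.3, 2.10.1, and 2.11) can be encoded as a small lookup. The sketch below does this under a few assumptions. The function name `has_cve_2022_41880_fix` is hypothetical. Release lines older than 2.8 are treated as unpatched, since no backport was announced for them. Only plain `MAJOR.MINOR.PATCH` strings are accepted.

```python
# Minimum patch release that carries the fix, per supported release line.
PATCHED_MINIMUMS = {(2, 8): 4, (2, 9): 3, (2, 10): 1}
# First minor release that ships with the fix from the start.
FIRST_FIXED_MINOR = (2, 11)


def parse_version(version: str) -> tuple:
    """Parse a plain 'MAJOR.MINOR.PATCH' string; reject rc/dev suffixes."""
    parts = version.split(".")
    if len(parts) != 3 or not all(p.isdigit() for p in parts):
        raise ValueError(f"expected MAJOR.MINOR.PATCH, got {version!r}")
    return tuple(int(p) for p in parts)


def has_cve_2022_41880_fix(version: str) -> bool:
    """Hypothetical check: does this TensorFlow release include the fix?"""
    major, minor, patch = parse_version(version)
    if (major, minor) >= FIRST_FIXED_MINOR:
        return True
    minimum_patch = PATCHED_MINIMUMS.get((major, minor))
    if minimum_patch is None:
        # Older release lines: outside the supported range, no backport announced.
        return False
    return patch >= minimum_patch
```

In practice, you would pass `tf.__version__` to this check. Pre-release strings such as release candidates raise `ValueError` by design, so an unknown build is never reported as safe.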

Timeline

Published on: 11/18/2022 22:15:00 UTC

Last modified on: 11/22/2022 21:52:00 UTC

References

- https://github.com/tensorflow/tensorflow/security/advisories/GHSA-8w5g-3wcv-9g2j

- https://github.com/tensorflow/tensorflow/blob/master/tensorflow/core/kernels/candidate_sampler_ops.cc

- https://github.com/tensorflow/tensorflow/commit/b389f5c944cadfdfe599b3f1e4026e036f30d2d4

- https://web.nvd.nist.gov/view/vuln/detail?vulnId=CVE-2022-41880