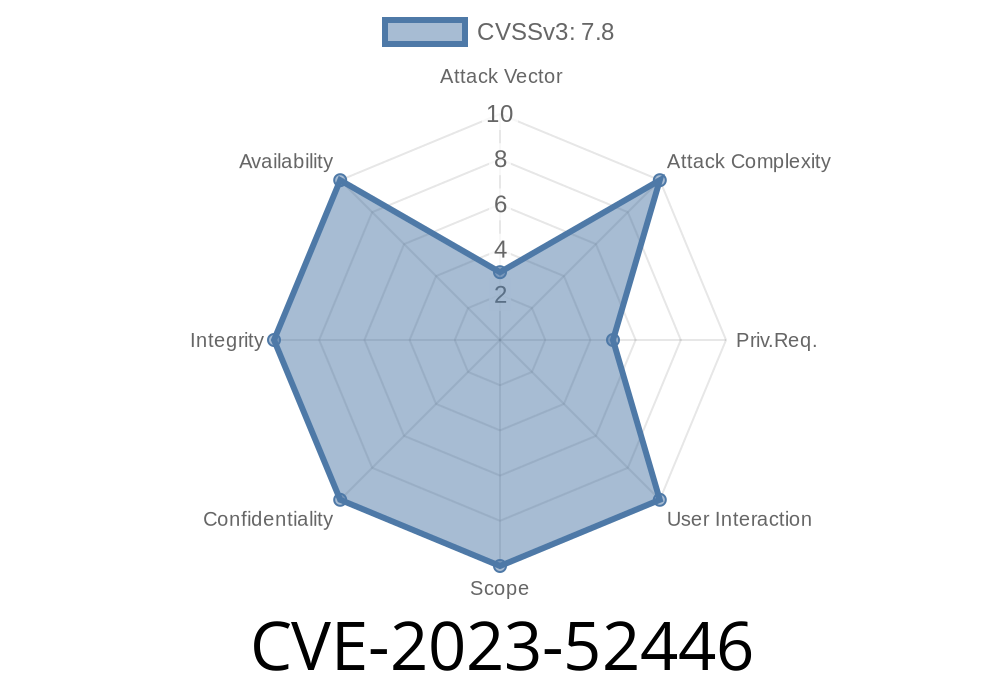

In December 2023, a critical vulnerability surfaced in the Linux kernel's eBPF (extended Berkeley Packet Filter) subsystem. Tracked as CVE-2023-52446, the flaw is subtle but dangerous: a race condition between btf_put() and map_free() can lead to a use-after-free in kernel memory. This post explains the bug, shows how it can be triggered and (in some environments) exploited, and describes how it was fixed.

What’s the Problem?

The eBPF subsystem allows userspace programs to load code into the kernel for processing network packets, observability, and more. For verification and safety, eBPF relies on “BTF” — BPF Type Format — which involves dynamic memory management and reference-counting.

The race condition in question:

When one thread calls btf_put() (to decrement and maybe free a BTF object) while another thread is still using the BTF from a BPF map, the pointer can become invalid. If map_free() later tries to access that memory, the kernel may end up using a freed slab object: a textbook use-after-free.

1. btf_put(): Decrements the reference count, possibly triggering btf_free() and freeing memory.

2. map_free(): Called to delete a BPF map, during which it tries to access its stored BTF object, now possibly gone.

The real trouble surfaces when kernel task scheduling interleaves these two paths just right: by the time map_free() refers to the BTF object, another thread or workqueue might have already invoked btf_put() and deallocated it.
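The put side of this pattern can be sketched in userspace C. This is only an illustration of reference counting in general: struct toy_obj, toy_get() and toy_put() are hypothetical names, and the released flag stands in for the point where real code would free the memory.

```c
#include <assert.h>
#include <stdatomic.h>
#include <stdbool.h>

/* Hypothetical stand-in for a reference-counted object such as a BTF. */
struct toy_obj {
    atomic_int refcnt;  /* number of live references */
    bool released;      /* set once the last reference is dropped */
};

static void toy_init(struct toy_obj *o)
{
    atomic_init(&o->refcnt, 1);  /* the creator holds the first reference */
    o->released = false;
}

static void toy_get(struct toy_obj *o)
{
    atomic_fetch_add(&o->refcnt, 1);
}

/* Returns true if this call dropped the last reference. */
static bool toy_put(struct toy_obj *o)
{
    /* atomic_fetch_sub returns the value held before the decrement */
    if (atomic_fetch_sub(&o->refcnt, 1) == 1) {
        o->released = true;  /* real code would free the object here */
        return true;
    }
    return false;
}
```

Any pointer to the object that is still in use after toy_put() returns true is dangling, which is the situation the deferred map teardown can end up in.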

This manifests at runtime with slab-use-after-free errors, often reported by KASAN (Kernel Address Sanitizer):

[ 1887.184724] BUG: KASAN: slab-use-after-free in bpf_rb_root_free+0x1f8/0x2b0

[ 1887.185599] Read of size 4 at addr ffff888106806910 by task kworker/u12:2/283

...

[ 1887.194004] ? bpf_rb_root_free+0x1f8/0x2b0

...
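When triaging reports like this, it can help to pull the access details out of the "Read of size ..." line programmatically. The following is a minimal sketch, assuming the line layout shown in the excerpt; struct kasan_access and parse_kasan_access() are hypothetical helper names, not part of any kernel tooling.

```c
#include <assert.h>
#include <stdio.h>
#include <string.h>

/* Fields of a KASAN access line, as printed in the kernel log. */
struct kasan_access {
    char kind[8];             /* "Read" or "Write" */
    unsigned size;            /* access width in bytes */
    unsigned long long addr;  /* faulting kernel address */
    char task[32];            /* task name and pid as printed */
};

/* Returns 0 on success, -1 if the line does not have the expected shape. */
static int parse_kasan_access(const char *line, struct kasan_access *out)
{
    /* Skip the "[ timestamp] " prefix if one is present */
    const char *p = strstr(line, "] ");
    p = p ? p + 2 : line;

    if (sscanf(p, "%7s of size %u at addr %llx by task %31s",
               out->kind, &out->size, &out->addr, out->task) != 4)
        return -1;
    return 0;
}
```

For the line above, this yields a 4-byte read at ffff888106806910 performed by a kworker, which matches the deferred (workqueue) teardown path discussed next.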

The problematic function, bpf_rb_root_free, looks something like this (a heavily simplified illustration, not the actual kernel source):

static void bpf_rb_root_free(struct bpf_map *map)

{

struct btf_record *rec = map->btf; // simplified: rec points into memory owned by the BTF object

if (rec && rec->refcount_off >= 0 && ...) {

// Use-after-free here if rec has already been freed!

if (--rec->refcount == 0)

kfree(rec);

}

...

}

But if (in another thread) btf_put() was already called and freed rec, this code now references invalid memory.

Here’s a relevant excerpt from a real-life crash log (cleaned up for clarity):

[ 1887.185599] Read of size 4 at addr ffff888106806910 by task kworker/u12:2/283

...

[ 1887.194004] ? bpf_rb_root_free+0x1f8/0x2b0

[ 1887.195668] bpf_rb_root_free+0x1f8/0x2b0

[ 1887.198319] bpf_obj_free_fields+0x1d4/0x260

[ 1887.198883] array_map_free+0x1a3/0x260

[ 1887.199380] bpf_map_free_deferred+0x7b/0xe0

...

This stack trace shows that freeing a map (bpf_map_free_deferred) called bpf_rb_root_free, which then tripped KASAN by accessing already-freed memory.
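Each frame in a kernel trace has the form symbol+0xOFFSET/0xSIZE, where the offset locates the instruction inside the function and the size is the function's total length. A small sketch of a parser for that notation follows; struct trace_frame and parse_frame() are hypothetical names used only for illustration.

```c
#include <assert.h>
#include <stdio.h>
#include <string.h>

/* One "symbol+0xOFFSET/0xSIZE" entry from a kernel stack trace. */
struct trace_frame {
    char symbol[64];
    unsigned long offset;  /* byte offset of the address inside the function */
    unsigned long size;    /* total size of the function in bytes */
};

/* Returns 0 on success, -1 if the text does not match the expected shape. */
static int parse_frame(const char *text, struct trace_frame *out)
{
    /* %63[^+] reads the symbol up to '+'; %lx accepts an optional 0x prefix */
    if (sscanf(text, " %63[^+]+%lx/%lx",
               out->symbol, &out->offset, &out->size) != 3)
        return -1;
    return 0;
}
```

Feeding it the top frame of the trace places the faulting read 0x1f8 bytes into bpf_rb_root_free, which is the kind of offset you would hand to a symbolizer to find the source line.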

Example Vulnerable Code Path

// Thread 1: Free up BTF

btf_put(map->btf); // refcount drops to 0, memory is freed

// Thread 2: Delayed cleanup

bpf_map_free_deferred() {

bpf_rb_root_free(map); // uses map->btf, which may now be stale

}

If thread 1 wins the race, thread 2 will dereference a dangling pointer.
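The same ordering can be replayed deterministically in userspace. The sketch below is a toy model, not kernel code: struct toy_btf, struct toy_map and the toy_* functions are hypothetical, and a freed flag replaces the actual kfree() so that the stale access can be observed safely instead of corrupting memory.

```c
#include <assert.h>
#include <stdbool.h>

/* Hypothetical stand-ins; these are not the kernel's real structures. */
struct toy_btf {
    int refcnt;
    bool freed;           /* models "memory returned to the slab" */
};

struct toy_map {
    struct toy_btf *btf;  /* pointer the map keeps using during teardown */
};

static void toy_btf_put(struct toy_btf *btf)
{
    if (--btf->refcnt == 0)
        btf->freed = true;  /* a real kernel would kfree() here */
}

/* Deferred teardown: returns true if it touched already-freed BTF data. */
static bool toy_map_free_deferred(struct toy_map *map)
{
    return map->btf->freed;  /* in the kernel this would be the stale read */
}

/* Buggy ordering: the reference is dropped before the deferred work runs. */
static bool buggy_teardown(struct toy_map *map)
{
    toy_btf_put(map->btf);              /* step 1: reference goes away */
    return toy_map_free_deferred(map);  /* step 2: teardown still reads it */
}
```

When the map holds the only reference, buggy_teardown() reports a stale access. If some other holder still keeps the BTF alive, the same sequence is harmless, which is part of why the bug is intermittent.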

An attacker who can win this race reliably could, depending on heap layout and system protections, escalate the use-after-free to full kernel code execution.

In practice, successful exploits would be non-trivial but feasible in containerized or cloud environments where eBPF maps are exposed to untrusted users.

NOTE:

As with most kernel UAF bugs, actual “exploits in the wild” are not public, but the primitive enables a class of privilege escalations.

How Was It Fixed?

The patch (see commit 501f09f06d44) ensures that the BTF object is only freed after all references, including those held by BPF maps, are gone. The fix keeps proper ownership until every map using the BTF is fully destroyed:

- The BTF reference is managed as part of the struct bpf_map lifecycle.

- The map's btf_put() call is deferred until after the map's map_free() callback has finished, so the teardown path can no longer read BTF data that has already been freed.
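As a rough userspace illustration of that ordering (assuming the fix amounts to dropping the map's BTF reference only once the map's own free callback is done), consider the toy model below. All demo_* names are hypothetical, and the freed flag stands in for a real kfree().

```c
#include <assert.h>
#include <stdbool.h>

/* Hypothetical stand-ins; these are not the kernel's real structures. */
struct demo_btf {
    int refcnt;
    bool freed;          /* models "memory returned to the slab" */
};

struct demo_map {
    struct demo_btf *btf;
    bool saw_freed_btf;  /* records whether teardown read freed data */
};

static void demo_btf_put(struct demo_btf *btf)
{
    if (--btf->refcnt == 0)
        btf->freed = true;  /* a real kernel would kfree() here */
}

/* Map-specific teardown that still needs to look at BTF data. */
static void demo_map_free(struct demo_map *map)
{
    map->saw_freed_btf = map->btf->freed;
}

/* Safe ordering: finish the map teardown first, then drop the reference. */
static void demo_map_free_deferred(struct demo_map *map)
{
    struct demo_btf *btf = map->btf;  /* save the pointer up front */

    demo_map_free(map);  /* BTF is still alive while this runs */
    demo_btf_put(btf);   /* only now may the BTF go away */
}
```

With this ordering the teardown never observes a freed BTF, yet the BTF is still released once the map is gone, so nothing leaks.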

Links and References

- Official Patch in Linux Kernel Git

- KASAN: Kernel Address Sanitizer Documentation

- LKML report: bpf: Fix a race condition between btf_put() and map_free()

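Below is simple C pseudocode for provoking the bug (do not use on production systems). The elided arguments are left open, and bpf_destroy_btf() is a placeholder for dropping the last reference to the BTF object (for example by closing its file descriptor), not a real API:

// spawn two threads:

// 1. create and destroy a bpf map rapidly

// 2. at the same time, destroy btf objects used by the map

void* thread1(void* arg) {

for (int i = 0; i < 10000; ++i) {

int map_fd = bpf_create_map(...); // map with custom btf

close(map_fd);

}

return NULL;

}

void* thread2(void* arg) {

for (int i = 0; i < 10000; ++i) {

bpf_destroy_btf(...); // placeholder, see note above

}

return NULL;

}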

// In main():

pthread_t t1, t2;

pthread_create(&t1, NULL, thread1, NULL);

pthread_create(&t2, NULL, thread2, NULL);

pthread_join(t1, NULL);

pthread_join(t2, NULL);

On a KASAN-enabled debug kernel, this execution pattern can produce KASAN/BUG splats, although the race window is narrow and many iterations may be needed before one appears.

Final Thoughts

CVE-2023-52446 is a classic example of how subtle reference counting and concurrency bugs in kernel-land can turn into powerful primitives for exploitation. While the fix is straightforward once the bug is discovered, the vulnerability lived unnoticed in upstream for some time. Always keep your kernels updated — and if you’re doing eBPF development, ensure you’re on a version post-patch.

*If you want more deep-dives into modern kernel bugs, follow the oss-security mailing list or keep track of LKML.*

Timeline

Published on: 02/22/2024 17:15:08 UTC

Last modified on: 03/14/2024 19:47:14 UTC