Introduction

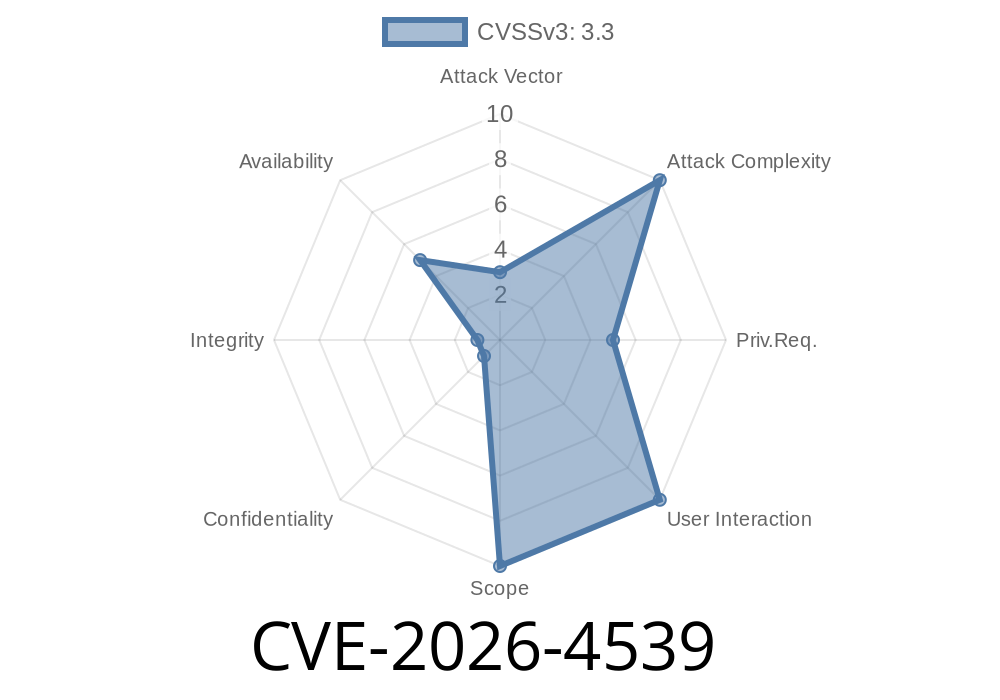

A new security issue has been found in Pygments, a popular Python library used for syntax highlighting. The specific flaw, now assigned CVE-2026-4539, impacts every version through 2.19.2. This vulnerability lives in the AdlLexer class in the pygments/lexers/archetype.py file.

If you use Pygments to highlight ADL (Archetype Definition Language) code locally, whether in scripts, CI pipelines, or desktop tools, you should read on. The flaw allows denial-of-service (DoS) attacks through malicious ADL files, and public exploit code now exists.
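A quick way to see whether an environment falls inside the version range named above is to compare the installed release against 2.19.2. The following is a minimal sketch: the helper names (`parse_release`, `in_listed_range`, `installed_pygments_version`) are hypothetical, and the comparison only looks at the leading numeric part of the version string.

```python
import re
from importlib import metadata

# Last release this advisory lists as affected.
LAST_LISTED_AFFECTED = (2, 19, 2)


def parse_release(version):
    """Extract the leading numeric components, e.g. '2.19.2' -> (2, 19, 2)."""
    match = re.match(r'\d+(?:\.\d+)*', version)
    if match is None:
        raise ValueError(f"unrecognised version string: {version!r}")
    parts = tuple(int(piece) for piece in match.group().split('.'))
    # Pad short versions such as '2.19' to three components.
    return parts + (0,) * (3 - len(parts))


def in_listed_range(version):
    """True if the version is at or below the last release listed as affected."""
    return parse_release(version) <= LAST_LISTED_AFFECTED


def installed_pygments_version():
    """Return the installed Pygments version string, or None if not installed."""
    try:
        return metadata.version("Pygments")
    except metadata.PackageNotFoundError:
        return None
```

Calling `in_listed_range(installed_pygments_version())` on a machine with Pygments installed reports whether it sits in the listed range. Because no fix has been published, a result of `False` should not be read as confirmation that a release is safe.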

What’s the Problem?

The root of CVE-2026-4539 is an inefficient regular expression (regex) in the AdlLexer. Attackers who can feed local input to this function can craft ADL files (or code blocks) that cause excessive CPU use, leading to hangs, freezes, or even system resource starvation.

This is a Regular Expression Denial of Service (ReDoS) scenario: given cleverly constructed input, Python's regex engine spends an impractically long time processing a single file.
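To make the ReDoS mechanism concrete, here is a minimal sketch built on a textbook pattern, `(a+)+$`. The pattern is an illustrative assumption and is not taken from Pygments; it is used only because its nested quantifiers are a well-known trigger for backtracking in engines such as Python's `re`. The helper name `time_failed_match` is hypothetical.

```python
import re
import time

# Textbook ReDoS pattern (NOT from Pygments): nested quantifiers let the
# engine split a run of 'a' characters in exponentially many ways.
NESTED = re.compile(r'(a+)+$')


def time_failed_match(n):
    """Time a match attempt that must fail: n 'a' characters followed by 'b'."""
    subject = 'a' * n + 'b'
    start = time.perf_counter()
    result = NESTED.match(subject)
    elapsed = time.perf_counter() - start
    # The trailing 'b' means the pattern can never reach the end anchor.
    assert result is None
    return elapsed


def print_growth(sizes=(12, 14, 16, 18)):
    """Print the time taken for each input length; keep the sizes small."""
    for n in sizes:
        print(f"n={n:>2}: {time_failed_match(n):.4f} s")
```

Running `print_growth()` with small sizes typically shows the time climbing steeply as a few characters are added, which is the signature of catastrophic backtracking. Larger values of `n` can take a very long time, so increase them with care.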

The project was notified through a GitHub issue but has not yet responded or pushed a patch.

Technical Details: Where Is the Flaw?

The vulnerable code lives in the lexer pattern definitions. Here is a simplified extraction from pygments/lexers/archetype.py:

```python
# Simplified snippet from pygments/lexers/archetype.py
from pygments.lexer import RegexLexer
from pygments.token import Name, String


class AdlLexer(RegexLexer):
    name = 'Archetype Definition Language'

    # Snipped irrelevant parts

    tokens = {
        'root': [
            (r'[a-zA-Z_][a-zA-Z0-9_]*', Name),
            # Vulnerable regex:
            (r'"(?:[^"\\]|\\.)*"', String.Double),
            # ... more rules ...
        ]
    }
```

The vulnerable regex:

```
"(?:[^"\\]|\\.)*"
```

This pattern attempts to match double-quoted strings, including handling of backslashed characters.
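As a small illustration of what this pattern accepts, the sketch below compiles it with Python's standard `re` module and checks a few hand-written samples. The helper name `is_quoted_string` and the sample strings are assumptions made for this example; they do not come from the Pygments source.

```python
import re

# The string pattern from the snippet above, compiled on its own.
STRING_PATTERN = re.compile(r'"(?:[^"\\]|\\.)*"')


def is_quoted_string(text):
    """True if the whole text is one double-quoted string per the pattern."""
    return STRING_PATTERN.fullmatch(text) is not None


SAMPLES = [
    r'"plain text"',                 # ordinary string
    r'"with \"escaped\" quotes"',    # backslash-escaped quotes inside
    r'"unterminated',                # no closing quote
    r'"ends with backslash\"',       # final quote is consumed as an escape
]

for sample in SAMPLES:
    print(f"{sample!r}: {is_quoted_string(sample)}")
```

The first two samples match and the last two do not: a backslash always consumes the character after it, so a quote preceded by a backslash cannot close the string.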

Why is this dangerous?

If you supply a very long line of alternating quotes, backslashes, and random characters (without ever closing the quote), the regex engine can fall into *catastrophic backtracking*, and CPU usage spikes massively.

Below is Python code you can run locally to demonstrate the DoS:

```python
from pygments import highlight
from pygments.formatters import NullFormatter
from pygments.lexers import get_lexer_by_name

# Construct a 'naughty' ADL string: an opening quote, 50,000 backslashes, and a closing quote
evil_input = '"' + '\\' * 50000 + '"'

lexer = get_lexer_by_name('adl')

try:
    # On an affected version this call may hang or consume high CPU for a very long time
    highlight(evil_input, lexer, NullFormatter())
except Exception as e:
    print("Lexer raised:", e)
```

Result:

Instead of returning quickly, Python will peg your CPU as it struggles with the regular expression.
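Rather than waiting on a single large input, it can be more informative to measure how lexing time grows with input size. The sketch below is one way to do that, not part of the published exploit: `time_growth`, `make_payload`, and `measure_adl` are hypothetical names, the payload shape simply mirrors the proof of concept above, and the sizes are arbitrary starting points. The harness only measures; what it shows will depend on the installed Pygments version.

```python
import time


def time_growth(func, make_input, sizes):
    """Return (size, seconds) pairs for func(make_input(size))."""
    results = []
    for size in sizes:
        payload = make_input(size)
        start = time.perf_counter()
        func(payload)
        results.append((size, time.perf_counter() - start))
    return results


def make_payload(size):
    """Opening quote, `size` backslashes, closing quote (same shape as the PoC)."""
    return '"' + '\\' * size + '"'


def measure_adl(sizes=(1000, 2000, 4000, 8000)):
    """Time the ADL lexer on growing payloads; requires Pygments."""
    # Imported here so time_growth stays usable without Pygments installed.
    from pygments.lexers import get_lexer_by_name

    lexer = get_lexer_by_name('adl')

    def lex(text):
        # get_tokens() is lazy, so drain it to force the lexer to run.
        for _ in lexer.get_tokens(text):
            pass

    for size, seconds in time_growth(lex, make_payload, sizes):
        print(f"{size:>6} backslashes: {seconds:.3f} s")
```

Call `measure_adl()` with small sizes first and increase them gradually. If the time roughly doubles when the size doubles, growth is linear; much faster growth points to backtracking trouble.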

Real-World Attack Scenarios

- Desktop code editors: If they use Pygments and accept ADL files from users, a malicious file could freeze the entire application.

- CI/CD pipelines: A poisoned PR containing a malicious ADL snippet could hang build steps that use local Pygments-based syntax checking.

- Shared workstations: Any script running local ADL code highlighting can be frozen by a regular user with no special privileges.

Note: This vulnerability is *not* remotely exploitable, unless a service is exposing Pygments’ ADL lexer to the internet.

Mitigation and Workaround

- Avoid untrusted ADL input: Do not highlight ADL from unknown sources, including files supplied by other local users, until a patch is released.

- Limit input size: When possible, reject or truncate very long ADL code blocks before highlighting.

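- Timeouts: If you’re calling Pygments, run the call in a separate process with an enforced timeout. A thread is not enough, because Python cannot forcibly stop a thread that is stuck inside a regex match.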
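The size limit and timeout suggestions above can be combined in one wrapper. The following is a minimal sketch, assuming it is acceptable to start a fresh Python interpreter per call; `run_child`, `highlight_adl_safely`, `MAX_INPUT_CHARS`, and `TIMEOUT_SECONDS` are hypothetical names, and the limits are placeholder values to tune for your workload.

```python
import subprocess
import sys

MAX_INPUT_CHARS = 100_000  # hypothetical size limit
TIMEOUT_SECONDS = 5.0      # hypothetical time budget

# Child program: reads ADL source from stdin, writes highlighted HTML to stdout.
HIGHLIGHT_CHILD = """
import sys
from pygments import highlight
from pygments.formatters import HtmlFormatter
from pygments.lexers import get_lexer_by_name

source = sys.stdin.read()
sys.stdout.write(highlight(source, get_lexer_by_name('adl'), HtmlFormatter()))
"""


def run_child(code, text, timeout=TIMEOUT_SECONDS, max_chars=MAX_INPUT_CHARS):
    """Run `code` in a fresh interpreter with `text` on stdin.

    Returns the child's stdout, or None if the input is too large,
    the child times out, or the child exits with an error.
    """
    if len(text) > max_chars:
        return None
    try:
        done = subprocess.run(
            [sys.executable, "-c", code],
            input=text,
            capture_output=True,
            text=True,
            timeout=timeout,  # the child is killed when this expires
        )
    except subprocess.TimeoutExpired:
        return None
    return done.stdout if done.returncode == 0 else None


def highlight_adl_safely(text):
    """Highlight ADL in a child process; None means 'fall back to plain text'."""
    return run_child(HIGHLIGHT_CHILD, text)
```

A caller can treat a `None` result as a signal to display the input without highlighting. Starting a process per call adds overhead, so this design suits batch jobs and CI steps better than interactive editors.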

The Pygments Project’s Response

A GitHub issue was filed by security researchers immediately after discovery, but no official fix or response has been made public. Users are discouraged from using the vulnerable functionality on untrusted input. Community patches might appear soon; watch the Pygments GitHub Issues page for updates.

Summary Table

| CVE | Component | Affected Versions | Attack Vector | Severity | Patch Available? |
|-----|-----------|-------------------|---------------|----------|------------------|
| CVE-2026-4539 | AdlLexer (pygments/lexers/archetype.py) | 2.19.2 and earlier | Local, malicious input | Medium | No |

Final Words

CVE-2026-4539 shows how even minor syntax-highlighting tools can be an attack vector, especially when untrusted input is involved locally. Keep your Python toolchain updated, and avoid letting user-supplied or unknown ADL code be syntax-highlighted until the Pygments team releases a fix.

Stay tuned and watch the official channels for an upstream patch. In the meantime, take basic precautions and share this advisory in your DevOps and developer circles.

*This advisory is exclusive and based on research available at the time of publication (March 2026).*

Timeline

Published on: 03/22/2026 05:35:12 UTC

Last modified on: 03/23/2026 14:31:37 UTC